Sometimes you want to more carefully dictate what a search engine

robot sees when it visits your site. In general, search engine

representatives will refer to the practice of showing different content to

users than to crawlers as cloaking, which violates the

engines’ Terms of Service (TOS) and is considered spam. However, there are legitimate uses for this concept that are not

deceptive to the search engines or malicious in intent. This section will

explore methods for doing this with cookies and session IDs.

1. What’s a Cookie?

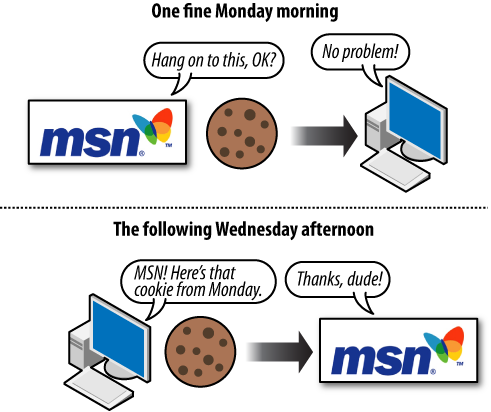

A cookie is a small text file that websites

can leave on a visitor’s hard disk, helping them to track that person

over time. Cookies are the reason Amazon.com remembers your username

between visits and the reason you don’t necessarily need to log in to

your Hotmail account every time you open your browser. Cookie data

typically contains a short set of information regarding when you last

accessed a site, an ID number, and, potentially, information about your

visit (see Figure 1).

Website developers can create options to remember visitors using

cookies for tracking purposes or to display different information to

users based on their actions or preferences. Common uses include

remembering a username, maintaining a shopping cart, and keeping track

of previously viewed content. For example, if you’ve signed up for an

account with SEOmoz, it will provide you with options on your My Account

page about how you want to view the blog and will remember that the next

time you visit.
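
As a rough illustration of how a site might read such a preference back, here is a minimal Python sketch using the standard library's `http.cookies` module. The cookie name `blog_view` and its two values are hypothetical, chosen only for this example; they are not the names any particular site uses.

```python
from http.cookies import SimpleCookie

# Hypothetical preference values, for illustration only.
VALID_VIEWS = {"snippets", "full"}


def read_blog_view(cookie_header: str, default: str = "snippets") -> str:
    """Return the visitor's saved blog view, or the default if none is set."""
    jar = SimpleCookie()
    jar.load(cookie_header)          # parse the raw "Cookie:" request header
    morsel = jar.get("blog_view")    # hypothetical cookie name
    if morsel is None or morsel.value not in VALID_VIEWS:
        return default               # no cookie, or an unexpected value
    return morsel.value
```

A visitor who sends no cookie at all (which, as discussed later, includes most crawlers) simply gets the default view.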

2. What Are Session IDs?

Session IDs are virtually identical to

cookies in functionality, with one big difference. Upon closing your

browser (or restarting), session ID information is no longer stored on

your hard drive (usually); see Figure 2. The website you were interacting

with may remember your data or actions, but it cannot retrieve session

IDs from your machine that don’t persist (and session IDs by default

expire when the browser shuts down). In essence, session IDs are more

like temporary cookies (although, as you’ll see shortly, there are

options to control this).
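
The difference between the two comes down to whether an expiration is attached. The following Python sketch, again using the standard `http.cookies` module, builds one persistent cookie and one session cookie. The cookie names and the one-year lifetime are assumptions made for the example.

```python
from http.cookies import SimpleCookie

ONE_YEAR_SECONDS = 60 * 60 * 24 * 365  # assumed lifetime for the example


def build_set_cookie_headers(username: str, session_id: str) -> list[str]:
    """Return two Set-Cookie header values: a persistent and a session cookie."""
    jar = SimpleCookie()

    # Persistent cookie: Max-Age tells the browser to keep it across restarts.
    jar["username"] = username
    jar["username"]["max-age"] = ONE_YEAR_SECONDS
    jar["username"]["path"] = "/"

    # Session cookie: no Expires or Max-Age, so the browser normally
    # discards it when it shuts down.
    jar["session_id"] = session_id
    jar["session_id"]["path"] = "/"

    return [morsel.OutputString() for morsel in jar.values()]
```

Adding a `max-age` (or `expires`) value to the second cookie would turn it into a persistent one.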

Although technically speaking, session IDs are just a form of

cookie without an expiration date, it is possible to set session IDs

with expiration dates similar to cookies (going out decades). In this

sense, they are virtually identical to cookies. Session IDs do come with

an important caveat, though: they are frequently passed in the URL

string, which can create serious problems for search engines (as every

request produces a unique URL with duplicate content). A simple fix is

to use the canonical tag (the rel="canonical" link element)

to tell the search engines which URL is the preferred version, so that they ignore the session

IDs.
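
One way to generate that tag is to strip the session parameter from the requested URL and emit the result as the canonical address. The sketch below is a simplified Python example; the parameter names in `SESSION_PARAMS` are assumptions, and it only handles session IDs carried in the query string, not those embedded in the path.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed session parameter names; adjust to whatever your platform uses.
SESSION_PARAMS = {"sid", "sessionid", "phpsessid"}


def canonical_url(url: str) -> str:
    """Return the URL with session-ID query parameters and fragment removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in SESSION_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    """Build the <link rel="canonical"> element for the page's <head>."""
    href = escape(canonical_url(url), quote=True)
    return f'<link rel="canonical" href="{href}">'
```

With this in place, every session-specific variant of a page points the engines back to the same clean URL.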

Note:

Any user has the ability to turn off cookies in their browser

settings. This often makes web browsing considerably more difficult,

and many sites will actually display a page saying that cookies are

required to view or interact with their content. Cookies, persistent

though they may be, are also deleted by users on a semiregular basis.

For example, a 2007

comScore study found that 33% of web users deleted their

cookies at least once per month.

3. How Do Search Engines Interpret Cookies and Session IDs?

They don’t. Search engine spiders are not built to maintain or

retain cookies or session IDs; they act like browsers with this

functionality shut off. However, unlike visitors whose browsers won’t

accept cookies, the crawlers can sometimes reach sequestered content by

virtue of webmasters who want to specifically let them through. Many

sites have pages that require cookies or sessions to be enabled but have

special rules for search engine bots, permitting them to access the

content as well. Although this is technically cloaking, there is a form

of this known as First Click Free that search engines generally allow.

Despite the occasional access engines are granted to

cookie/session-restricted pages, the vast majority of cookie and session

ID usage creates content, links, and pages that limit access. Web

developers can leverage the power of concepts such as First Click Free

to build more intelligent sites and pages that function in optimal ways

for both humans and engines.

4. Why Would You Want to Use Cookies or Session IDs to Control Search Engine Access?

There are numerous potential tactics to leverage cookies and

session IDs for search engine control. Here are some of the major

strategies you can implement with these tools, although there are

many other possibilities:

Showing multiple navigation paths while controlling the flow of link juice

Visitors to a website often have multiple ways in which

they’d like to view or access content. Your site may benefit from

offering many paths to reaching content (by date, topic, tag,

relationship, ratings, etc.), but doing so expends PageRank or link juice

that would be better spent on a single,

search-engine-friendly navigational structure. This is important

because these varied sort orders may be seen as duplicate

content.

You can require a cookie for users to access the alternative

sort order versions of a page, and prevent the search engine from

indexing multiple pages with the same content. One alternative

solution to this is to use the canonical tag to tell the search engine

that these alternative sort orders are really just the same

content as the original page.
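
The cookie requirement described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the cookie name `visitor`, the sort names, and the default order are all hypothetical.

```python
from http.cookies import SimpleCookie
from typing import Optional

DEFAULT_SORT = "date"                        # the one order crawlers should see
ALLOWED_SORTS = {"date", "topic", "rating"}  # hypothetical sort options


def choose_sort_order(requested_sort: Optional[str], cookie_header: Optional[str]) -> str:
    """Serve an alternative sort order only to visitors who send our cookie."""
    jar = SimpleCookie()
    jar.load(cookie_header or "")
    has_cookie = "visitor" in jar            # hypothetical cookie name

    if has_cookie and requested_sort in ALLOWED_SORTS:
        return requested_sort
    # Cookieless clients (including most crawlers) always get the default
    # order, so only one version of the listing is exposed to them.
    return DEFAULT_SORT
```

Because the alternative orders are never served without the cookie, a crawler requesting `?sort=rating` still receives the default listing.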

Keep limited pieces of a page’s content out of the engines’ indexes

Many pages may contain content that you’d like to show to

search engines and pieces you’d prefer appear only for human

visitors. These could include ads, login-restricted information,

links, or even rich media. Once again, showing noncookied users

the plain version and cookie-accepting visitors the extended

information can be invaluable. Note that this is often used in

conjunction with a login, so only registered users can access the

full content (such as on sites like Facebook and LinkedIn). For

Yahoo! you can also use the robots-nocontent tag (applied as a class attribute value), which allows you to

specify portions of your page that Yahoo! should ignore (Google

and Bing do not support the tag).

Grant access to pages requiring a login

As with snippets of content, there are often entire pages or

sections of a site on which you’d like to restrict search engine

access. This can be easy to accomplish with cookies/sessions, and

it can even help to bring in search traffic that may convert to

“registered-user” status. For example, if you had desirable

content that you wished to restrict, you could create a page with

a short snippet and an offer to continue reading upon

registration, which would then allow access to that work at the

same URL.

Avoid duplicate content issues

One of the most promising areas for cookie/session use is to

prohibit spiders from reaching multiple versions of the same

content, while allowing visitors to get the version they prefer.

As an example, at SEOmoz, logged-in users can see full blog

entries on the blog home page, but search engines and

nonregistered users will see only the snippets. This prevents the

content from being listed on multiple pages (the blog home page

and the specific post pages), and provides a positive user

experience for members.
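
The snippet-versus-full-post behavior can be illustrated with a short Python sketch. The `Post` class, the `logged_in` flag, and the 40-character snippet length are assumptions made for the example, not a description of how SEOmoz actually implements it.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    body: str


def home_page_text(post: Post, logged_in: bool, snippet_chars: int = 40) -> str:
    """Return the full body for logged-in users and a snippet for everyone else."""
    if logged_in or len(post.body) <= snippet_chars:
        return post.body
    # Search engines and anonymous visitors see only the opening of the post,
    # so the full text lives at a single URL: the post's own page.
    return post.body[:snippet_chars].rstrip() + "..."
```

In a real site the `logged_in` value would come from validating the visitor's session cookie, which crawlers never present.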